In the interconnected software ecosystem, APIs serve as the critical connective tissue, enabling disparate systems to communicate, share data, and deliver powerful functionality. A poorly executed integration, however, can quickly become a significant liability. It can introduce security vulnerabilities, create performance bottlenecks, and lead to system failures that disrupt business operations and degrade user experience. Simply connecting two endpoints is not enough; building a robust, secure, and scalable integration requires a deliberate and strategic approach.

This guide rounds up the essential API integration best practices that modern development teams should implement. We will move beyond surface-level advice to provide actionable insights on building resilient and efficient connections. These practices assume a working knowledge of the fundamental concepts of API integration, which provide the foundation for the more advanced techniques explored here.

Throughout this article, we will delve into seven critical areas, offering practical guidance and real-world examples. You will learn how to:

- Implement proper authentication and authorisation.

- Design with rate limiting and throttling.

- Implement comprehensive error handling and logging.

- Use API versioning strategies.

- Implement effective caching strategies.

- Design for idempotency to prevent duplicate operations.

- Implement the circuit breaker pattern for improved resilience.

By mastering these principles, you can ensure your integrations are not fragile points of failure but strong, reliable bridges that support your application’s growth and stability.

1. Implement Proper Authentication and Authorisation

Effective API integration begins and ends with robust security. At its core, this involves two distinct but related processes: authentication (verifying who a user or system is) and authorisation (determining what they are allowed to do). Neglecting this fundamental step is like leaving the front door of your digital infrastructure wide open, exposing sensitive data and critical functionalities to potential misuse. This is a cornerstone of API integration best practices, as it establishes the necessary trust and control for secure data exchange.

Authentication confirms the identity of the client application, service, or user making the request. Authorisation, which follows successful authentication, grants or denies permission to access specific resources or perform certain actions. For instance, an authenticated user might be authorised to read data but not to delete it.

Common Authentication and Authorisation Mechanisms

Choosing the right security model depends on your API’s use case, the sensitivity of the data, and the user experience you want to provide.

- API Keys: This is the simplest method, where a unique string is assigned to a developer or application. It’s effective for tracking API usage and identifying the calling application. Stripe’s API, for example, uses API keys to authenticate requests from merchant platforms, ensuring that only verified merchants can process payments.

- OAuth 2.0: An industry-standard protocol for authorisation, OAuth 2.0 allows applications to obtain limited access to user accounts on an HTTP service. It is ideal for scenarios where a third-party application needs to act on behalf of a user without obtaining their credentials. Google’s APIs heavily rely on OAuth 2.0 to let users grant apps like calendars or email clients secure, scoped access to their data.

- JSON Web Tokens (JWT): JWTs are a compact, self-contained way for securely transmitting information between parties as a JSON object. This information can be verified and trusted because it is digitally signed. JWTs are often used in single sign-on (SSO) scenarios and are popular in modern web applications for stateless authentication.

Actionable Tips for Implementation

To ensure your security measures are effective, consider these critical practices:

- Enforce HTTPS: Always transmit tokens and API keys over an encrypted HTTPS connection to prevent man-in-the-middle attacks.

- Implement the Principle of Least Privilege (PoLP): Grant only the minimum permissions necessary for an application or user to perform its function. GitHub’s fine-grained personal access tokens are a great example, allowing users to define exactly what a token can and cannot do.

- Securely Store Credentials: Never hardcode API keys or secrets in your client-side code. Use environment variables, secure vaults like HashiCorp Vault, or services like AWS Secrets Manager. For specific guidance on securing access to your APIs, you can refer to established API Keys Best Practices.

- Use Token Expiration: Set short lifespans for access tokens and implement a refresh token mechanism to obtain new ones without requiring user re-authentication. This limits the window of opportunity for attackers if a token is compromised. Learn more about how to secure your API integrations to enhance your data protection strategies.
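The token expiration tip can be sketched on the client side as a small cache that renews the access token shortly before it expires. The `TokenProvider` class, the `fetch_token` callable, and the 30-second safety margin are all assumptions made for illustration; in a real integration, `fetch_token` would call your identity provider's token or refresh endpoint.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TokenProvider:
    """Caches a short-lived access token and renews it before it expires."""

    def __init__(
        self,
        fetch_token: Callable[[], Tuple[str, float]],
        skew_seconds: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        # fetch_token is a hypothetical hook returning (token, lifetime_in_seconds).
        self._fetch_token = fetch_token
        self._skew = skew_seconds
        self._clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        now = self._clock()
        # Renew slightly early so a request never leaves with a token
        # that expires while it is still in flight.
        if self._token is None or now >= self._expires_at - self._skew:
            token, lifetime = self._fetch_token()
            self._token = token
            self._expires_at = now + lifetime
        return self._token

    def auth_header(self) -> Dict[str, str]:
        return {"Authorization": f"Bearer {self.get()}"}
```

Keeping the renewal logic in one place means the rest of the client only ever asks for `auth_header()` and never stores a token itself.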

2. Design with Rate Limiting and Throttling

A crucial aspect of building and consuming APIs involves managing the flow of traffic to prevent overload and ensure fair usage. Rate limiting and throttling are control mechanisms designed to limit the number of API requests a client can make within a specific time window. Implementing these controls is not just about protecting the API provider’s infrastructure; it’s a fundamental API integration best practice that guarantees service stability and quality for all consumers.

Rate limiting restricts the request frequency from a single client, while throttling can slow down requests once a limit is approached. This proactive approach prevents any single integration from monopolising system resources, whether due to a runaway script, a spike in user activity, or a malicious denial-of-service attack. A well-designed rate limiting strategy maintains API health and ensures a predictable performance baseline.
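One common way to enforce such a limit is a token bucket, sketched below under simple assumptions: a single process, one bucket per client, and an injectable clock so the behaviour can be tested. The class and parameter names are illustrative and do not come from any particular gateway or library.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows short bursts up to `capacity` and a steady rate of `refill_per_second`."""

    def __init__(
        self,
        capacity: int,
        refill_per_second: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self._clock()
        elapsed = now - self._updated
        self._updated = now
        # Top the bucket up for the time that has passed, never above capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        # Out of tokens: the caller would typically answer with 429 here.
        return False
```

A provider would keep one bucket per API key and reject requests for which `allow()` returns `False`; a consumer can use the same structure to pace its own outgoing calls.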

Common Rate Limiting and Throttling Strategies

API providers implement various policies based on their service architecture and business models. These limits are often tiered, offering higher thresholds for paid plans.

- Request Quotas: Many APIs enforce limits over different time periods. The Twitter API, for instance, limits the number of tweets an application can post within a specific 15-minute window, ensuring real-time platform stability. Similarly, GitHub provides a generous 5,000 requests per hour for authenticated users to support developer workflows.

- Concurrent Requests: Some systems limit the number of parallel requests a client can have open at any given time. This is common in resource-intensive APIs to prevent a single client from tying up too many server-side processes.

- Geographic and Usage-Based Quotas: Services like the Google Maps API employ sophisticated limits, including daily quotas and requests-per-second restrictions, which can vary based on the specific endpoint being called. This ensures high-demand services remain available globally. Stripe also uses advanced rate limiting to protect its payment processing infrastructure from bursts of traffic.

Actionable Tips for Implementation

Both API providers and consumers must be mindful of rate limits to build resilient integrations.

- Provide Clear Rate Limit Information: API responses should include headers like `X-RateLimit-Limit` (total requests allowed), `X-RateLimit-Remaining` (requests left in the window), and `X-RateLimit-Reset` (when the window resets). This transparency allows developers to build adaptive clients.

- Implement Exponential Backoff: When a client receives a `429 Too Many Requests` error, it shouldn’t retry immediately. Instead, it should use an exponential backoff algorithm, waiting for progressively longer periods between retries to avoid overwhelming the server.

- Use Distributed Rate Limiting for Scaled Applications: If your application is deployed across multiple servers, a centralised store like Redis is needed to track request counts accurately and enforce limits consistently across your entire infrastructure.

- Differentiate Limits by Endpoint: Not all API endpoints are equal. A read-only endpoint is less resource-intensive than one that triggers complex data processing. Apply stricter limits to more demanding endpoints.
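On the consumer side, the backoff tip might look like the sketch below. It assumes a hypothetical `send` callable that performs one request and returns a `(status, headers, body)` tuple, and it honours the standard `Retry-After` header (in its seconds form) when the server provides one. The delays, attempt count, and jitter strategy are illustrative defaults rather than recommendations from any specific API.

```python
import random
import time
from typing import Callable, Mapping, Tuple

Response = Tuple[int, Mapping[str, str], str]


def backoff_delay(
    attempt: int,
    base: float = 0.5,
    cap: float = 30.0,
    rand: Callable[[], float] = random.random,
) -> float:
    """Random wait between 0 and min(cap, base * 2**attempt) seconds."""
    return rand() * min(cap, base * (2 ** attempt))


def call_with_backoff(
    send: Callable[[], Response],
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
    rand: Callable[[], float] = random.random,
) -> Response:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 0
    while True:
        response = send()
        status, headers, _body = response
        # Return on anything other than 429, or once the retry budget is spent.
        if status != 429 or attempt == max_attempts - 1:
            return response
        retry_after = headers.get("Retry-After", "")
        if retry_after.isdigit():
            # The server told us how long to wait, so trust it.
            delay = float(retry_after)
        else:
            delay = backoff_delay(attempt, rand=rand)
        sleep(delay)
        attempt += 1
```

The random jitter spreads retries from many clients over time, so they do not all return at the same instant when the limit window resets.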

3. Implement Comprehensive Error Handling and Logging

Even the most well-designed API integrations can fail. A robust system for handling API errors gracefully is not just a reactive measure but a proactive strategy for building resilient and user-friendly applications. Comprehensive error handling and logging ensure that when issues arise, they are communicated clearly to the client and recorded effectively for debugging and analysis. This approach is a critical component of API integration best practices, transforming potential failures into opportunities for improvement and maintaining a high-quality user experience.

Effective error handling involves providing clear, meaningful error messages that help developers understand what went wrong. Logging, on the other hand, is the practice of recording these events and other system activities. Together, they create a feedback loop that is essential for monitoring API health, identifying bugs, and improving overall reliability.

Key Components of Error Handling and Logging

A mature error management strategy goes beyond simple try-catch blocks and generic error codes. It involves a systematic approach to classifying, communicating, and capturing error data.

- Meaningful HTTP Status Codes: Use standard HTTP status codes correctly to indicate the nature of the response. For example, use `400 Bad Request` for client-side input errors, `401 Unauthorized` for authentication failures, `403 Forbidden` for authorisation issues, and `500 Internal Server Error` for unexpected server-side problems.

- Structured Error Responses: Provide a consistent, machine-readable error format, typically in JSON. This response should include a unique error code, a human-readable message, and potentially a link to documentation. The Shopify API excels here, providing structured responses that detail which specific input fields caused a validation error.

- Detailed Logging: Capture sufficient context in your logs to reconstruct an issue. This includes the request payload (with sensitive data redacted), response details, timestamps, and a unique identifier to trace the request’s journey. Centralised logging platforms like the ELK Stack (Elasticsearch, Logstash, Kibana), popularised by Elastic, are invaluable for aggregating and searching through logs from multiple services.

Actionable Tips for Implementation

To build a fault-tolerant integration, consider these essential practices:

- Use Correlation IDs: Assign a unique ID to every incoming request and pass it through all internal service calls. This allows you to trace a single transaction across a distributed system, making debugging significantly easier. AWS APIs often include a `Request ID` in their error responses for this purpose.

- Implement Different Log Levels: Use various log levels (e.g., DEBUG, INFO, WARN, ERROR) to categorise the severity of events. This enables you to filter logs effectively, focusing on critical errors during an outage or examining detailed debug information during development.

- Never Log Sensitive Data: Ensure that confidential information like passwords, API keys, or personal user data is always filtered out or masked before being written to logs to maintain security and compliance.

- Adopt Centralised, Structured Logging: Use a structured format like JSON for your logs and send them to a centralised system like Splunk, Datadog, or Sentry. This simplifies parsing, searching, and creating dashboards to monitor API health. You can learn more about managing complex systems to streamline your operational efficiency.
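Several of these tips (structured error bodies, correlation IDs, redaction, and JSON logs) fit together in a few lines. The sketch below is one possible arrangement, not a prescribed format: the handler, the error codes, the set of sensitive field names, and the required `email` field are all hypothetical.

```python
import json
import logging
import uuid
from typing import Optional, Tuple

logger = logging.getLogger("api")

# Hypothetical list of field names that must never reach the logs.
SENSITIVE_KEYS = {"password", "api_key", "authorization", "token"}


def redact(payload: dict) -> dict:
    """Mask sensitive top-level fields before the payload is logged."""
    return {
        key: ("***" if key.lower() in SENSITIVE_KEYS else value)
        for key, value in payload.items()
    }


def error_response(status: int, code: str, message: str, correlation_id: str) -> Tuple[int, dict]:
    """Consistent, machine-readable error body with a traceable ID."""
    body = {"error": {"code": code, "message": message, "correlation_id": correlation_id}}
    return status, body


def handle_request(payload: dict, correlation_id: Optional[str] = None) -> Tuple[int, dict]:
    # Reuse the caller's ID if one was passed along, otherwise start a new trace.
    correlation_id = correlation_id or str(uuid.uuid4())
    context = {"correlation_id": correlation_id, "payload": redact(payload)}
    if "email" not in payload:
        logger.warning(json.dumps({"event": "validation_failed", "field": "email", **context}))
        return error_response(400, "missing_field", "The 'email' field is required.", correlation_id)
    logger.info(json.dumps({"event": "request_ok", **context}))
    return 200, {"status": "ok", "correlation_id": correlation_id}
```

Returning the correlation ID in the error body lets a developer quote it in a support request, and the same ID then locates the matching log line.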

4. Use API Versioning Strategies

APIs, like any software, evolve. New features are added, existing functionalities are improved, and occasionally, breaking changes are necessary. A robust versioning strategy is essential for managing this evolution gracefully. It allows you to introduce updates and improvements without disrupting existing client integrations, which is a critical aspect of API integration best practices. Failing to version your API can lead to a chaotic and fragile ecosystem where a single update can break countless dependent applications.

API versioning is the practice of managing changes to your API in a way that provides a clear and predictable path for developers. It ensures that consumers of your API can continue using an older, stable version while you roll out new features or make structural changes. This dual support provides stability for your users and flexibility for your development team.

Common Versioning Approaches

Choosing a versioning method depends on your API’s complexity, your audience, and your long-term roadmap. The key is to select one and apply it consistently.

- URL Path Versioning: This is one of the most common and straightforward methods. The version number is embedded directly into the URL path, for example, `https://api.example.com/v1/resource`, which makes it immediately obvious which version of the API a client is interacting with.

- Header Versioning: Here, the version is specified in a request header, either a custom header or a versioned media type such as `Accept: application/vnd.myapi.v2+json`. GitHub’s REST API, for instance, has accepted the media type `application/vnd.github.v3+json` in the `Accept` header. This approach keeps the URIs clean and unchanged across versions but requires clients to be more deliberate in setting their request headers.

- Query Parameter Versioning: A version number can also be passed as a query parameter in the URL, such as `https://api.example.com/resource?version=2`. While simple to implement, this method can clutter URLs and is less frequently used for major version changes.

- Date-Based Versioning: Popularised by Stripe, this strategy involves versioning the API based on the date of its release (e.g., specifying a `Stripe-Version` header with a date like `2022-11-15`). This allows for continuous, non-breaking updates while enabling clients to opt in to newer, potentially breaking changes by updating their version date.
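To show where each scheme places the version, the sketch below resolves a version from the path, the `Accept` media type, or a query parameter, in that order. It reuses the example URL and media type from the list above; the function name, the precedence order, and the default version are assumptions, and a real API would normally commit to a single scheme rather than accept all three.

```python
import re
from typing import Mapping
from urllib.parse import parse_qs, urlsplit

DEFAULT_VERSION = "1"  # assumed fallback when the client states no version

_PATH_VERSION = re.compile(r"^/v(\d+)(?:/|$)")
_MEDIA_VERSION = re.compile(r"application/vnd\.myapi\.v(\d+)\+json")


def resolve_version(url: str, headers: Mapping[str, str]) -> str:
    parts = urlsplit(url)

    # 1. URL path versioning: /v2/resource
    match = _PATH_VERSION.match(parts.path)
    if match:
        return match.group(1)

    # 2. Header versioning: Accept: application/vnd.myapi.v2+json
    match = _MEDIA_VERSION.search(headers.get("Accept", ""))
    if match:
        return match.group(1)

    # 3. Query parameter versioning: /resource?version=2
    values = parse_qs(parts.query).get("version")
    if values:
        return values[0]

    return DEFAULT_VERSION
```

Whichever scheme is chosen, routing on the resolved version in one place keeps the handlers for each version isolated from one another.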

Actionable Tips for Implementation

To implement versioning effectively, your strategy must be clear, well-documented, and predictable for your users.

- Be Consistent: Once you choose a versioning strategy, stick with it. Inconsistency creates confusion and makes it difficult for developers to build reliable integrations.

- Provide Clear Migration Guides: When you introduce a new version, publish comprehensive documentation that details what has changed and provides a clear, step-by-step migration path from the older version. Google’s API documentation is a great example of this.

- Implement a Sunset Policy: Clearly communicate the lifecycle of each API version. Announce deprecation plans well in advance, giving developers ample time to migrate. A formal sunset policy builds trust and prevents sudden disruptions.

- Maintain a Detailed Changelog: A public, chronological changelog is invaluable. It serves as the primary source of truth for all changes, bug fixes, and new features, helping developers stay informed.

- Use Semantic Versioning: For communicating the nature of changes, adopt semantic versioning (MAJOR.MINOR.PATCH). This helps developers understand the impact of an update at a glance: MAJOR for breaking changes, MINOR for new features, and PATCH for bug fixes. This approach is fundamental to maintaining a stable yet evolving API ecosystem and ensuring regulatory alignment. Understanding these principles is a key part of API compliance management.

5. Implement Caching Strategies

In high-performance API integrations, speed is paramount. Caching is a critical technique that dramatically improves response times, reduces server load, and lowers operational costs by storing copies of frequently accessed data closer to the consumer. By serving data from a high-speed cache instead of the original data source, you can avoid redundant, expensive operations like database queries or calls to other services. This makes implementing caching strategies one of the most impactful API integration best practices for creating scalable and responsive systems.

Caching involves temporarily storing API responses so that subsequent identical requests can be served much faster. When a request comes in, the system first checks the cache. If a valid, fresh copy of the response exists (a “cache hit”), it is returned immediately. If not (a “cache miss”), the request proceeds to the origin server, and the response is then stored in the cache for future requests.
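The hit and miss flow described above can be sketched as a small in-process cache with a time-to-live. The `TTLCache` class and its `loader` callback are illustrative names; the loader stands in for the call to the origin server, and a shared cache such as Redis would replace the dictionary in a multi-server deployment.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Serves stored values until they are older than `ttl_seconds`."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key: Hashable, loader: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            # Cache hit: a fresh copy exists, so the origin is not contacted.
            self.hits += 1
            return entry[1]
        # Cache miss: fetch from the origin and remember the result.
        self.misses += 1
        value = loader()
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key: Hashable) -> None:
        # Event-driven invalidation, e.g. after the underlying record changes.
        self._entries.pop(key, None)
```

The `hits` and `misses` counters give the hit rate directly, which is the first metric to check when tuning the TTL.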

Common Caching Mechanisms

The right caching strategy depends on data volatility, traffic patterns, and architectural design. Different layers of caching can be combined for maximum effect.

- Content Delivery Network (CDN) Caching: Services like Cloudflare or Akamai cache API responses at edge locations around the globe. This is ideal for public, static, or slowly changing data, as it serves content from a location physically closer to the user, significantly reducing latency.

- In-Memory Caching: Using tools like Redis or Memcached, you can keep frequently accessed data in RAM rather than fetching it from disk-backed storage on every request, typically on a dedicated cache server shared by your application instances. This is extremely fast and well suited to caching the results of complex database queries or computations. Facebook, for instance, relies heavily on in-memory caching to power its social graph and deliver news feeds quickly.

- API Gateway Caching: Many API gateways, such as Amazon API Gateway, offer built-in response caching capabilities. This allows you to cache responses at the API layer for a configured time-to-live (TTL), reducing the number of calls made to your backend services.

Actionable Tips for Implementation

To build an effective and efficient caching layer, consider these key practices:

- Set Appropriate Time-to-Live (TTL): The TTL determines how long a response remains in the cache. Set a short TTL for highly volatile data and a longer TTL for static data to ensure users receive up-to-date information without overloading the origin server.

- Use ETags for Conditional Requests: Implement ETags (entity tags) in your API responses. An ETag is an identifier for a specific version of a resource. Clients can send this ETag in a subsequent request, and if the resource hasn’t changed, the server can respond with a `304 Not Modified` status, saving bandwidth.

- Monitor Cache Performance: Continuously track metrics like cache hit and miss rates. A low hit rate may indicate that your caching strategy is ineffective, your TTL is too short, or the cache size is too small. Use this data to fine-tune your approach.

- Develop a Clear Cache Invalidation Strategy: Decide how you will remove or update stale data from the cache. This could be TTL-based, event-driven (e.g., clearing a user’s data from the cache when they update their profile), or manual. A poor invalidation strategy can lead to users seeing outdated information.
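The ETag tip can be illustrated with a server-side sketch that derives the tag from the response body and answers a matching `If-None-Match` with `304 Not Modified`. The `respond` function and its tuple return shape are assumptions for illustration, and the comparison is simplified: a complete implementation would also handle weak validators, comma-separated lists of tags, and the `*` wildcard.

```python
import hashlib
import json
from typing import Dict, Mapping, Tuple


def make_etag(body: bytes) -> str:
    # A quoted hash of the exact response bytes identifies this version.
    return '"' + hashlib.sha256(body).hexdigest() + '"'


def respond(resource: dict, request_headers: Mapping[str, str]) -> Tuple[int, Dict[str, str], bytes]:
    # sort_keys keeps the serialisation stable, so equal data yields an equal tag.
    body = json.dumps(resource, sort_keys=True).encode("utf-8")
    etag = make_etag(body)
    if request_headers.get("If-None-Match") == etag:
        # The client's copy is current: send headers only, no body.
        return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag, "Content-Type": "application/json"}, body
```

The client stores the `ETag` from the first response and sends it back as `If-None-Match` on the next request, paying for the full body only when the resource has actually changed.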

6. Design for Idempotency

In the unpredictable world of network communication, requests can fail, time out, or be duplicated. Designing for idempotency ensures that repeating the same API request multiple times produces the same result as making it just once. This prevents unintended side effects, such as accidentally charging a customer twice or creating duplicate records. Embracing this concept is a critical API integration best practice for building reliable and resilient systems, particularly for crucial operations involving financial transactions or data modifications.

Idempotency provides a safety net for clients. If a client sends a request but doesn’t receive a response due to a network glitch, it can safely retry the request without worrying about creating a duplicate transaction or resource. The API server recognises the retried request and returns the original result without re-processing it.

Common Idempotency Mechanisms

The most common approach to achieving idempotency is through a unique key, often sent in the request header, that the server uses to identify and de-duplicate requests.

- Idempotency-Key Header: This widely adopted method involves the client generating a unique identifier (like a UUID) and including it in a custom header, such as `Idempotency-Key`. The server stores the result of the first request associated with this key. Subsequent requests with the same key will receive the stored response without re-executing the operation. Stripe famously uses this pattern to prevent duplicate charges, giving developers confidence when handling payments.

- Idempotent Resource Creation: For APIs that create resources, idempotency can be built into the business logic. Several AWS APIs, for example, accept a client-supplied token on create calls (EC2’s `RunInstances` takes a `ClientToken`), so that repeating the call with the same token and parameters returns the original resource instead of creating a second one.

- Transaction-Specific Identifiers: Payment processors like PayPal and Square use transaction-specific identifiers to ensure that payment processing is idempotent. This prevents a single purchase from resulting in multiple charges, even if the client’s system retries the API call.

Actionable Tips for Implementation

To properly implement idempotency in your API, consider these essential practices:

- Use a Unique Idempotency Key: Clients should generate a sufficiently unique key, such as a UUID or a hash of the request payload, for each distinct operation. This key should be sent in a request header (e.g., `Idempotency-Key`).

- Set a Sensible Expiration for Keys: Store idempotency keys and their corresponding responses for a reasonable period, like 24 hours. This prevents the server from having to store them indefinitely while still protecting against recent retries.

- Clearly Document Idempotent Operations: Your API documentation must specify which endpoints support idempotency and how clients should generate and send the key. This clarity is vital for developers integrating with your API.

- Implement at the Business Logic Layer: True idempotency should be handled within your core business logic, not just at the network or controller layer. This ensures the entire operation, from database writes to firing events, is executed only once.

- Handle Concurrent Requests: Implement locking mechanisms to manage concurrent requests that arrive with the same idempotency key. The first request should be processed, while subsequent ones wait for the first to complete before receiving the cached response.
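A minimal sketch of the server-side bookkeeping behind these tips follows. It assumes a single process with in-memory storage; the `IdempotencyStore` name and the `operation` callback are illustrative. A production version would keep keys in a shared store with an expiry, as suggested above, and would also check that a reused key arrives with the same request parameters.

```python
import threading
from typing import Any, Callable, Dict


class IdempotencyStore:
    """Runs an operation at most once per key and replays its stored result."""

    def __init__(self) -> None:
        self._results: Dict[str, Any] = {}
        self._key_locks: Dict[str, threading.Lock] = {}
        self._guard = threading.Lock()

    def run_once(self, key: str, operation: Callable[[], Any]) -> Any:
        # One lock per key: concurrent retries of the same request queue up,
        # while requests with different keys proceed independently.
        with self._guard:
            lock = self._key_locks.setdefault(key, threading.Lock())
        with lock:
            if key in self._results:
                return self._results[key]
            # If the operation raises, nothing is stored and a retry runs it again.
            result = operation()
            self._results[key] = result
            return result
```

The first request with a given key performs the work; every later request with that key, whether sequential or concurrent, receives the stored result.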

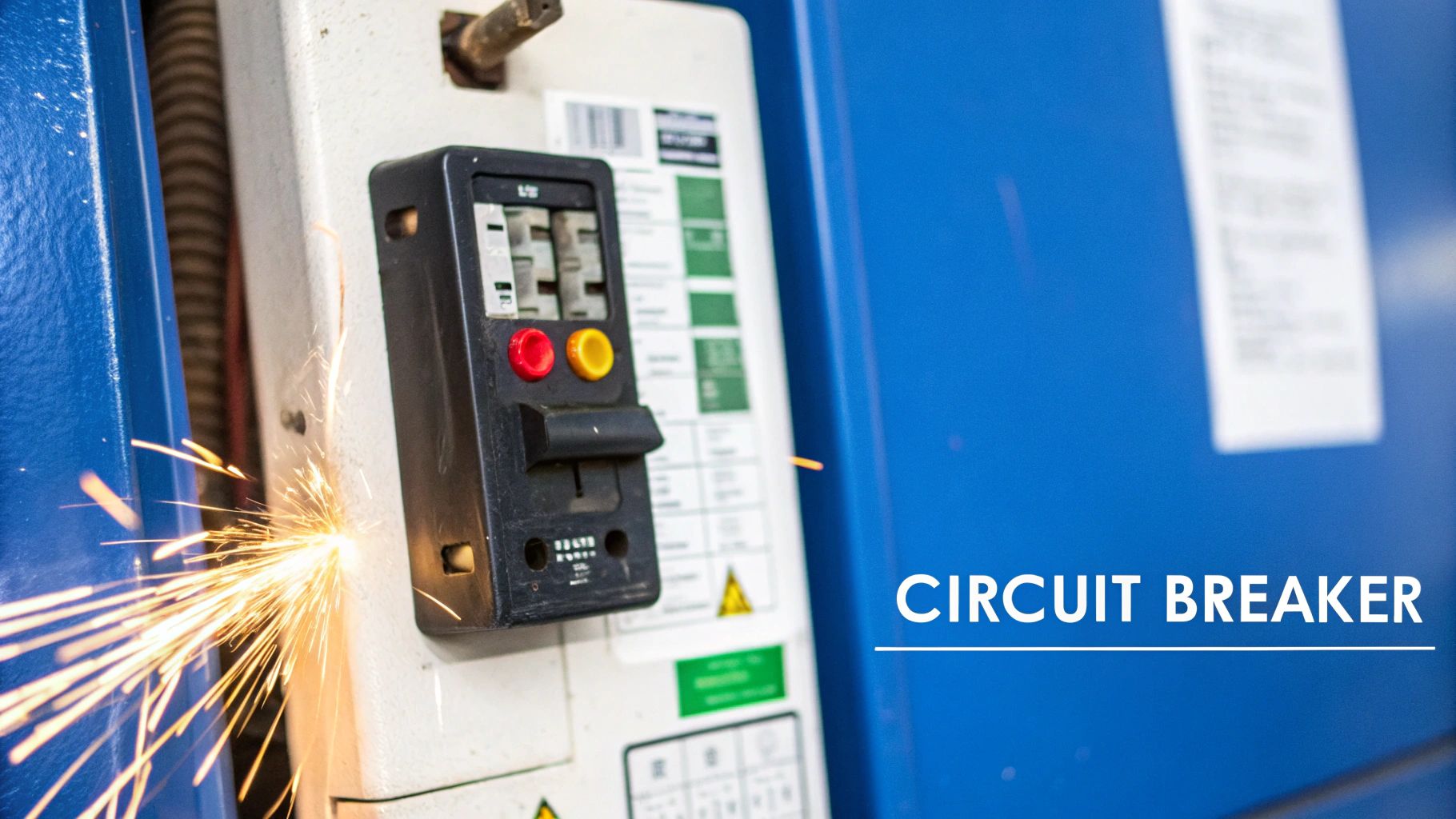

7. Implement the Circuit Breaker Pattern

In a distributed system, where services frequently call one another, a single failing service can trigger a cascade of failures across the entire architecture. The Circuit Breaker pattern is a critical fault-tolerance mechanism that prevents this by acting as a proxy for operations that might fail. This is an essential entry in our list of API integration best practices because it enhances system resilience and stability, preventing a localised issue from becoming a system-wide outage.

The pattern works like an electrical circuit breaker. It monitors calls to a remote service, and if the number of failures crosses a predefined threshold, the circuit breaker “trips” or opens. For a set period, all subsequent calls to the failing service are immediately blocked without being attempted, allowing the downstream service time to recover. After a timeout, the breaker enters a “half-open” state, allowing a limited number of test requests through. If these succeed, the breaker closes and resumes normal operation; if they fail, it returns to the open state and the timeout starts again.
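The three states can be sketched as a small wrapper around any callable. This is a simplified, single-threaded illustration with assumed names and defaults (`CircuitBreaker`, a threshold of three consecutive failures, a 30-second reset timeout); it counts consecutive failures rather than a failure percentage and does not limit how many trial calls run while half-open.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._threshold = failure_threshold
        self._reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self._reset_timeout:
            return "half-open"
        return "open"

    def call(self, operation: Callable[[], Any]) -> Any:
        state = self.state
        if state == "open":
            # Fail fast without touching the struggling service.
            raise CircuitOpenError("circuit is open; call skipped")
        try:
            result = operation()
        except Exception:
            self._failures += 1
            # A failed trial call, or too many consecutive failures, opens the circuit.
            if state == "half-open" or self._failures >= self._threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Callers would catch `CircuitOpenError` and serve a fallback, such as cached data or a default value, instead of waiting on a service that is known to be failing.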

Real-World Application of Circuit Breakers

This pattern is not just a theoretical concept; it’s a cornerstone of modern microservices architecture, popularised by tech giants to maintain high availability.

- Netflix Hystrix: Netflix originally popularised the concept with its Hystrix library. In their vast microservices ecosystem, Hystrix prevents a failure in a non-critical service (like a recommendations engine) from bringing down essential services like streaming or authentication.

- Shopify: The e-commerce platform integrates with numerous third-party services for payments, shipping, and taxes. Shopify uses circuit breakers to isolate its core platform from failures in these external dependencies, ensuring that a problem with a third-party shipping calculator doesn’t stop customers from completing purchases.

- Uber: With thousands of microservices powering its ride-hailing platform, Uber extensively uses circuit breakers. This ensures that if a service like fare estimation becomes slow or unresponsive, it doesn’t impact critical user-facing functions like booking a ride.

Actionable Tips for Implementation

To effectively deploy the Circuit Breaker pattern in your API integration strategy, consider the following practices:

- Set Realistic Failure Thresholds: Configure the failure count or percentage based on historical performance data and the specific service’s SLOs (Service Level Objectives). A threshold that is too low may trip the breaker unnecessarily, while one that is too high might not prevent a cascading failure.

- Implement Meaningful Fallbacks: When the circuit is open, don’t just return a generic error. Provide a graceful fallback response, such as returning cached data, default values, or a user-friendly message. This improves the user experience during a service degradation.

- Use Exponential Backoff for Recovery: If a test request fails while the breaker is “half-open”, lengthen the wait before the next trial exponentially rather than probing at a fixed interval. This prevents a thundering herd of requests from overwhelming a service that is just beginning to recover.

- Combine with Other Resilience Patterns: Circuit breakers work best when combined with other patterns like retries and timeouts. A retry mechanism can handle transient network glitches, while the circuit breaker handles more persistent service failures.

API Integration Best Practices Comparison

| Item | Implementation Complexity | Resource Requirements | Expected Outcomes | Ideal Use Cases | Key Advantages |
|---|---|---|---|---|---|
| Implement Proper Authentication and Authorisation | High – multi-layer security, token management | Moderate – secure storage and rotation | Strong protection of sensitive data and access control | APIs requiring secure access, user permission control | Granular access, scalable security, industry compliance |
| Design with Rate Limiting and Throttling | Medium – tuning limits and client handling | Low to Moderate – monitoring infrastructure | Prevent abuse, ensure fair usage, maintain stability | Public APIs with heavy consumer traffic, multi-tier access | Protects infrastructure, fair resource distribution |
| Implement Comprehensive Error Handling and Logging | Medium – standardised formats and log storage | Moderate – log management and storage | Improved debugging, monitoring, and audit readiness | APIs needing reliability, debugging support | Enhances dev experience, proactive issue detection |
| Use API Versioning Strategies | Medium – version maintenance and docs | Low to Moderate – documentation and support | Enables API evolution without breaking clients | APIs evolving with multiple client versions | Backward compatibility, smooth migrations |
| Implement Caching Strategies | Medium – multiple cache layers and invalidation | Moderate – caching infrastructure | Improved performance, reduced server load | Performance-critical APIs with repeatable data requests | Faster responses, cost savings, scalability |
| Design for Idempotency | Medium to High – server state management | Moderate – state and key storage | Safe retries, reduced duplicates | Payment APIs, data modification operations | Reliability, safe retry, prevents duplicate effects |
| Implement Circuit Breaker Pattern | High – state management, thresholds | Moderate to High – monitoring and failover | Prevents cascading failures, system resilience | Distributed microservices, unstable dependent services | Fault tolerance, graceful degradation, resource savings |

Integrating with Confidence: Your Path Forward

Navigating the complex landscape of API integration can seem daunting, but the journey from disjointed systems to a cohesive digital ecosystem is built upon a foundation of proven principles. The seven API integration best practices we have explored are not merely technical items on a checklist; they are the strategic pillars that support robust, scalable, and secure application networks. By moving beyond basic connectivity, you transform your integrations from potential points of failure into sources of genuine competitive advantage.

Recapping our journey, we started with the non-negotiable gatekeepers of your system: proper authentication and authorisation. We then moved to ensuring stability and fairness with rate limiting and throttling, preventing any single consumer from overwhelming your resources. We embraced a proactive stance on failure by implementing comprehensive error handling and logging, turning unexpected issues into valuable, actionable insights.

The path forward was further clarified by strategies for long-term health and evolution. API versioning provides a clear, manageable path for updates without disrupting existing consumers. Caching strategies were introduced as a powerful tool to enhance performance, reduce latency, and lower operational costs. We addressed the challenge of unreliable networks and duplicate requests by designing for idempotency, ensuring operations can be retried safely. Finally, the circuit breaker pattern equipped our systems with the resilience to gracefully handle downstream service failures, preventing cascading outages and preserving the user experience.

From Theory to Tangible Value

Adopting these API integration best practices is more than an academic exercise; it delivers tangible business value. For startups and SMEs, a well-architected integration strategy means faster time-to-market and the ability to build sophisticated products by leveraging third-party services without introducing fragility. For large enterprises, these practices are essential for managing complexity at scale, ensuring that the hundreds or thousands of APIs powering business operations are reliable, secure, and maintainable.

Think of it this way: every API call is a promise. It’s a promise of data, a promise of functionality, and a promise of reliability. When you implement robust authentication, you promise security. When you handle errors gracefully, you promise a dependable experience. When you version your API thoughtfully, you promise stability and a clear path for the future. Mastering these concepts is fundamentally about building and keeping these promises, which in turn builds trust with your partners, developers, and end-users. This trust is the currency of the digital economy.

Your Actionable Next Steps

The true measure of knowledge is its application. As you move forward from this article, consider these immediate, actionable steps to put these principles into practice:

- Conduct an Integration Audit: Review one of your existing, critical API integrations. Assess it against the seven practices discussed. Where are the gaps? Is authentication robust enough? Is there a clear rate-limiting policy? How are errors logged and handled?

- Prioritise One Improvement: You do not need to fix everything at once. Select the most critical gap identified in your audit, perhaps a lack of a circuit breaker for an unreliable third-party service, and create a plan to implement it.

- Update Your Development Standards: Codify these API integration best practices into your team’s official development guidelines. Make them part of your code review process, ensuring that new integrations are built correctly from the start.

- Evaluate Your Tooling: Assess whether your current API gateways, monitoring tools, and development libraries adequately support these best practices. Investing in the right tools can significantly accelerate adoption and reduce manual effort.

By systematically applying these lessons, you are not just connecting applications; you are engineering a more resilient, secure, and efficient digital future for your organisation. You are building a system that can withstand unforeseen challenges and scale to meet future opportunities with confidence.

Ready to see these best practices in action? SpringVerify builds its powerful, developer-friendly background verification API on these core principles of security, reliability, and scalability. Integrate with confidence and streamline your hiring workflows by leveraging an API designed for excellence. Explore the SpringVerify API to simplify your background check processes today.